Multi-Cluster Orchestration of 5G Experimental Deployments within SLICES-RI over High-Speed Networking Fabric

Within SLICES-RI, the Working Group on post-5G infrastructures has developed a blueprint for a disaggregated, real-time post-5G network architecture that can be deployed on commodity networking and computing equipment. This activity is part of the European SLICES-RI research infrastructure and constitutes a distributed experimental post-5G playground for academic research, to be deployed across several EU countries. The blueprint is meant to be reproducible and to evolve collaboratively, and it builds on open-source solutions such as OpenAirInterface and Kubernetes (K8s).

Truly large-scale and realistic experimentation requires a variety of high-capacity resources, which are frequently highly heterogeneous. Such resources are available in research infrastructures worldwide, but these infrastructures function more as isolated silos of resources than as a unified research space. The result is a fragmented view of the total available resources, which hinders the deployment of fully distributed, large-scale applications. Addressing this challenge requires integrating the various research facilities into a single environment that offers seamless and simple access to heterogeneous experimental resources from sites around the globe. Such an environment needs a central hub for managing and orchestrating resources and experiments, while still allowing direct access to individual sites for the same operations. To this end, the UTH team designed and implemented a fully fledged multi-domain orchestration framework on top of the SLICES-RI post-5G blueprint. The framework provides centralized orchestration and fine-grained control of CNFs, VNFs and services over Kubernetes-based resource clusters, and facilitates the discovery and interconnection of services that reside in distinct clusters.

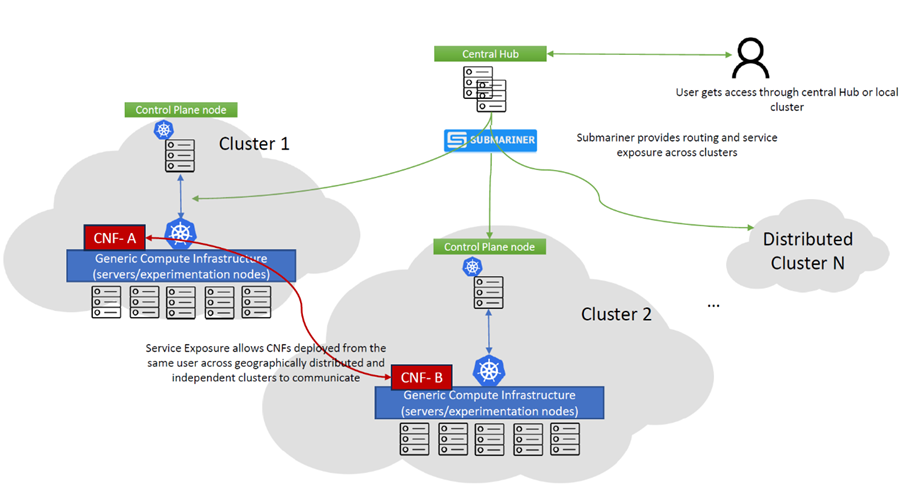

Figure 1: Overall Architecture integrating isolated islands under a single central hub

Figure 1 depicts the overall architecture under consideration. It comprises several independent research infrastructures, displayed as nodes, each of which represents a resource island. The resources of each island are managed directly by a Kubernetes-based container management system. On top of these clusters sits a central hub, where a multi-domain orchestrator is deployed and interfaced directly with the individual Kubernetes-based clusters, enabling the deployment and management of containerized workloads across domains. From the central hub, experimenters can log in to a graphical user interface that allows them to inspect clusters and their worker nodes, deploy and configure pods and deployments, monitor the status of infrastructure and workloads, and define security and network policies. At the hub level, SUSE Rancher was employed as the orchestration framework for VNFs and CNFs. Rancher is a Kubernetes management tool that can deploy and manage clusters on any provider or location, and can also import existing, distributed Kubernetes clusters running in different regions, on different cloud providers, or on-premises.
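To make the "single central hub" idea concrete, the following Python sketch models a hub that imports independently managed clusters and exposes one aggregated inventory. It is not Rancher's API: the `CentralHub` and `Cluster` classes, the cluster and site names, the workload names and the per-node CPU figures are all hypothetical, chosen only to illustrate how a unified view replaces per-site silos.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """A Kubernetes cluster run independently at one site (hypothetical model)."""
    name: str
    site: str
    nodes: dict[str, int]  # worker node name -> allocatable CPU cores
    workloads: list[str] = field(default_factory=list)


class CentralHub:
    """Hypothetical central hub that imports existing clusters, similar in
    spirit to importing clusters into a multi-domain orchestrator."""

    def __init__(self) -> None:
        self.clusters: dict[str, Cluster] = {}

    def import_cluster(self, cluster: Cluster) -> None:
        # Importing does not change how the site manages its own cluster;
        # the hub only gains visibility and control over it.
        if cluster.name in self.clusters:
            raise ValueError(f"cluster {cluster.name!r} already imported")
        self.clusters[cluster.name] = cluster

    def inventory(self) -> dict[str, dict[str, int]]:
        # Unified view: every worker node of every imported cluster.
        return {name: dict(c.nodes) for name, c in self.clusters.items()}

    def total_cpu(self) -> int:
        # Aggregate capacity across all sites, instead of one view per silo.
        return sum(sum(c.nodes.values()) for c in self.clusters.values())

    def deploy(self, cluster_name: str, workload: str) -> None:
        # Placement is explicit: the experimenter picks the target cluster.
        self.clusters[cluster_name].workloads.append(workload)


hub = CentralHub()
hub.import_cluster(Cluster("cluster-a", "site-a", {"worker-1": 32, "worker-2": 32}))
hub.import_cluster(Cluster("cluster-b", "site-b", {"worker-1": 64}))
hub.deploy("cluster-a", "oai-gnb")
hub.deploy("cluster-b", "oai-5g-core")
print(hub.total_cpu())  # 128
print(sorted(hub.inventory()))  # ['cluster-a', 'cluster-b']
```

Keeping each `Cluster` intact and only registering it with the hub reflects the requirement that sites remain directly accessible while also being reachable from the central point.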

Although the multi-domain orchestrator can manage multiple containerized clusters deployed at different sites, this alone is not sufficient to deploy a workflow, or in our case a 5G experimental network, across these clusters. The missing piece is network stitching: given multiple network subnets as input, it produces a single end-to-end network as output. For this purpose, the CNCF tool Submariner was used. Submariner offers encrypted or unencrypted L3 cross-cluster connectivity, service export and discovery across clusters, and support for interconnecting clusters with overlapping CIDRs.
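Two of the features listed above can be illustrated with Python's standard `ipaddress` module. The sketch below detects overlapping cluster CIDRs and gives every cluster a distinct subnet of a shared global pool, which mirrors the idea behind Submariner's Globalnet component; it also builds the `clusterset.local` DNS name under which an exported service is discovered, following the Kubernetes Multi-Cluster Services (MCS) naming convention. The cluster names, CIDRs and service names are hypothetical, and the `242.0.0.0/8` pool with a `/16` per cluster is an assumption modelled on Globalnet's defaults; this is not Submariner's own allocation code.

```python
import ipaddress
from itertools import combinations

# Hypothetical CIDRs of three independently deployed clusters;
# cluster-a and cluster-b were installed with the same range.
CLUSTER_CIDRS = {
    "cluster-a": ipaddress.ip_network("10.42.0.0/16"),
    "cluster-b": ipaddress.ip_network("10.42.0.0/16"),
    "cluster-c": ipaddress.ip_network("10.244.0.0/16"),
}

# Assumed global pool and per-cluster size (modelled on Globalnet defaults).
GLOBAL_POOL = ipaddress.ip_network("242.0.0.0/8")
PER_CLUSTER_PREFIX = 16


def overlapping_pairs(cidrs):
    """Return every pair of clusters whose local CIDRs overlap."""
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(sorted(cidrs.items()), 2)
        if net_a.overlaps(net_b)
    ]


def assign_global_cidrs(cidrs, pool=GLOBAL_POOL, prefix=PER_CLUSTER_PREFIX):
    """Give each cluster a distinct subnet of the global pool, so that it can
    be addressed unambiguously even when local CIDRs collide."""
    subnets = pool.subnets(new_prefix=prefix)
    return {name: next(subnets) for name in sorted(cidrs)}


def clusterset_fqdn(service, namespace):
    """DNS name under which an exported service resolves from any cluster."""
    return f"{service}.{namespace}.svc.clusterset.local"


print(overlapping_pairs(CLUSTER_CIDRS))
print(assign_global_cidrs(CLUSTER_CIDRS))
print(clusterset_fqdn("oai-amf", "oai"))
```

The overlap check shows why plain routing fails here (two clusters claim the same addresses), while the per-cluster global subnets never overlap and can therefore be routed across the stitched network.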

Submariner consists of multiple components that together provide connectivity between clusters. One of these is the Gateway Engine, which is deployed in each cluster and whose primary function is to create tunnels to all other clusters. The Gateway Engine supports three tunneling implementations, which Submariner calls cable drivers: a) Libreswan, an IPsec VPN implementation; b) WireGuard, an alternative VPN tunneling protocol; and c) unencrypted tunnels via VXLAN.
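Because every Gateway Engine builds a tunnel to every other cluster, the connections form a full mesh: with N clusters, each gateway maintains N-1 tunnels and the deployment as a whole holds N(N-1)/2 of them. The Python sketch below enumerates that mesh for a chosen cable driver. The cluster names are hypothetical, and the lower-case driver identifiers (`libreswan`, `wireguard`, `vxlan`) are an assumption about how the drivers are named when a cluster is configured; the code only models the topology and does not set up any tunnel.

```python
from itertools import combinations

# The three tunneling implementations (cable drivers) of the Gateway Engine.
CABLE_DRIVERS = {
    "libreswan": "IPsec, encrypted",
    "wireguard": "WireGuard, encrypted",
    "vxlan": "VXLAN, unencrypted",
}


def gateway_mesh(clusters, cable_driver):
    """Return one (cluster, cluster, driver) entry per gateway-to-gateway tunnel."""
    if cable_driver not in CABLE_DRIVERS:
        raise ValueError(f"unknown cable driver: {cable_driver!r}")
    # Every unordered pair of clusters is connected by exactly one tunnel.
    return [(a, b, cable_driver) for a, b in combinations(sorted(clusters), 2)]


def tunnels_per_gateway(clusters):
    """Each gateway terminates one tunnel towards every other cluster."""
    return len(clusters) - 1


clusters = ["cluster-a", "cluster-b", "cluster-c", "cluster-d"]
mesh = gateway_mesh(clusters, "wireguard")
print(len(mesh))                      # 6 tunnels in total
print(tunnels_per_gateway(clusters))  # 3 tunnels per gateway
```

The quadratic growth of the mesh is one reason why the per-tunnel cost of the chosen cable driver matters as more sites join.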

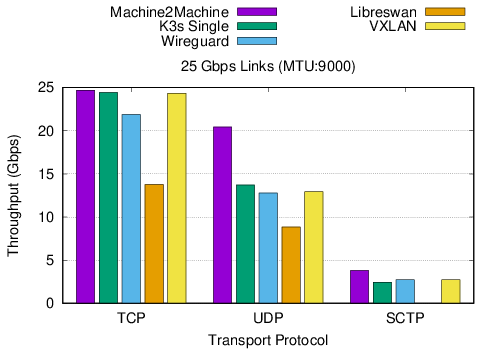

Figure 2: Indicative performance results for high-speed links for different Submariner configurations

The performance of the three cable drivers was evaluated with traffic generated by different transport protocols, in order to determine the best option. The results show that the choice of cable driver in Submariner is of the utmost importance, as it significantly affects the performance of the workloads deployed on top. In our measurements, the VXLAN cable driver appeared to outperform the other solutions, delivering performance closest to that of the bare-metal case. However, VXLAN does not encrypt traffic, which makes it unsuitable for production-grade environments. The next best option is therefore the WireGuard driver, which offers encrypted tunnels with comparable performance.
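The conclusion of the evaluation can be captured as a small selection rule: prefer VXLAN when traffic does not need to be encrypted, and otherwise use WireGuard. The Python sketch below encodes that ranking and renders a corresponding `subctl join` command line. The function names and the `broker-info.subm` file name are hypothetical placeholders, and the `--clusterid` and `--cable-driver` flags are assumed from Submariner's `subctl` tool; they should be checked against `subctl join --help` for the version in use.

```python
import shlex

# Ranking taken from the evaluation: VXLAN performed closest to bare metal,
# WireGuard was the best-performing encrypted option.
RANKED_DRIVERS = [
    ("vxlan", False),      # (cable driver, provides encryption)
    ("wireguard", True),
    ("libreswan", True),
]


def choose_cable_driver(require_encryption):
    """Pick the best-ranked driver that satisfies the encryption requirement."""
    for driver, encrypted in RANKED_DRIVERS:
        if encrypted or not require_encryption:
            return driver
    raise LookupError("no cable driver satisfies the requirement")


def join_command(cluster_id, require_encryption, broker_info="broker-info.subm"):
    """Build a subctl join command line (flags assumed, see lead-in)."""
    args = [
        "subctl", "join", broker_info,
        "--clusterid", cluster_id,
        "--cable-driver", choose_cable_driver(require_encryption),
    ]
    return shlex.join(args)


print(join_command("cluster-a", require_encryption=True))
print(join_command("lab-cluster", require_encryption=False))
```

Keeping the ranking as data rather than as branching logic makes it easy to reorder the drivers if measurements on different hardware point elsewhere.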

- Syrigos, N. Makris, T. Korakis, “Multi-Cluster Orchestration of 5G Experimental Deployments in Kubernetes over High-Speed Fabric”, in Proc. of the 2023 IEEE Globecom Workshop on Communication and Computing Integrated Networks and Experimental Future G Platforms (GC 2023 Workshop - FutureGPlatform).